Project Methods

Executive Summary

Congress faces a persistent information gap. The qualitative, ground-level knowledge that legislators need to write effective policy and conduct meaningful oversight — the kind carried by the people who actually implement programs and live with their consequences — rarely reaches Capitol Hill through existing channels. Congress lacks systematic tools for soliciting this input, and the practitioners who hold it lack structured ways to provide it.

In 2025, the departure of thousands of career federal employees created both an urgent need and a rare opportunity to test whether that gap could be narrowed. POPVOX Foundation launched Departure Dialogues as a proof of concept: a lightweight, replicable process for capturing qualitative stakeholder input at scale in a format useful to Congress. This paper documents the methodology — how the project was designed, what tools and partnerships it relied on, what worked, what required adaptation, and what the experience reveals for future efforts. Substantive findings are presented separately in a companion report.

The model combined four elements: a coalition of aligned nonpartisan organizations that provided reach and credibility; asynchronous, low-friction participation channels designed to meet participants where they were; clear choices around attribution that allowed participants to make informed decisions about anonymity; and AI-assisted qualitative analysis through Talk to the City (T3C), which enabled a small team to surface thematic patterns across hundreds of open-ended responses without dedicated research staff.

Key lessons for future implementers:

Coalition networks are the binding constraint — and the trust mechanism. Build distribution partnerships with organizations your target population already trusts before launching data collection.

Demand is not the bottleneck. Participants engaged readily and substantively. The harder design problems are accessibility, trust, and privacy — not recruitment.

Asynchronous formats scale; interactive formats add depth. Use both deliberately, and sequence them: broad collection first, then targeted depth.

AI-assisted analysis makes the model viable for small teams. Without it, qualitative synthesis at this scale would require dedicated research capacity that most Congressional offices do not have.

The model fits some stages of the policy cycle better than others. It is strongest for agenda setting, implementation feedback, and reauthorization — stages where early signals and practitioner perspective have the highest marginal value.

Departure Dialogues demonstrated that the technical and operational barriers to this kind of work are lower than commonly assumed. The remaining challenges are institutional: questions of verification, authority, and how Congress integrates qualitative input into processes built around testimony and written records. This paper is offered as a practical resource for offices, committees, and organizations ready to begin that experimentation.

Table of Contents

How It Worked: Narrative Timeline

Observations & Lessons for Future Implementers

Introduction

Democratic institutions face a challenge that has only grown more acute in recent years: the world changes faster than Congress is able to keep up. This is what scholars and practitioners have come to call the “pacing problem” — the widening gap between the pace of technological, economic, and social change and the capacity of legislatures to understand, respond to, and regulate it.

The pacing problem has many dimensions, but one of the most underappreciated is informational. Effective legislation and oversight require Congress to solicit and process large amounts of qualitative, ground-level knowledge — what might in other contexts be called “intelligence.”

Congress has, of course, developed institutions and channels for gathering information. The Congressional Research Service and Government Accountability Office produce rigorous analytical work. Committee hearings bring in expert witnesses. Interest groups and advocacy organizations supply research, policy proposals, and constituent stories. Whistleblowers occasionally surface critical information about agency dysfunction. And constituent engagement — casework, town halls, correspondence — generates an enormous volume of contact between offices and the public.

But each of these channels has significant limitations, and together they leave substantial gaps in what Congress actually knows. CRS and GAO produce high-quality work, but their reports are necessarily retrospective, often broad in scope, and constrained by the questions members think to ask. Committee hearings are performative as often as they are informative, structured around prepared testimony rather than open-ended inquiry. Academic engagement with Congress is sporadic and often poorly matched to legislative timelines. And while interest groups are sophisticated at packaging information for legislative consumption, they are — by definition — advancing a particular agenda; the information they provide is selected and framed accordingly.

Constituent engagement, meanwhile, generates perhaps the richest raw material of any of these channels, but Congress has invested remarkably little in its capacity to actually learn from it. The tools and workflows that govern constituent contact — CRM systems, form letter templates, case tracking databases — are overwhelmingly designed around responsiveness and service delivery, not insight generation. Offices track volume, response times, and issue categories, because those are the things that are easy to count. The actual substance of what constituents are communicating — the patterns, the pain points, the implementation failures that show up again and again — is largely left to the memory and intuition of individual staff.

This is a specific instance of a broader problem. As qualitative researcher Chelsea Mauldin has argued, there is a persistent bias in policy institutions toward quantitative metrics — not because they are more meaningful, but because they are easier to produce and process. Much of what policy programs are designed to achieve — safety, security, wellbeing, human flourishing — resists meaningful quantification, because these are fundamentally subjective experiences that vary across individuals, communities, and contexts. Flattening that complexity into numerical aggregates can create the illusion of understanding while obscuring precisely the contextual, experiential knowledge that would make policy more effective. Survey data can tell you what people think; it cannot tell you why, or illuminate the motivations, implicit values, and lived circumstances that shape their relationship to a policy or program. That deeper layer — the layer where genuine insight lives — requires qualitative methods: open-ended engagement, careful listening, and the analytical work of identifying patterns across individual stories.

This matters for Congress because qualitative intelligence is not merely useful at one point in the legislative process — it is valuable across the entire policy cycle. At the agenda-setting stage, it reveals what problems actually look like on the ground, surfacing needs and failures that may not yet be visible in administrative data or advocacy campaigns. During policy formulation, it provides a reality check against proposals that may look sound on paper but collide with the complexity of implementation. It can generate democratic authorization for action — there is a difference between citing a statistic and conveying, with specificity and texture, what a policy failure means in the lives of the people affected by it. During implementation, qualitative insight from practitioners and service recipients can identify breakdowns, workarounds, and unintended consequences far earlier than formal evaluation cycles. In oversight, it provides the granular, ground-level perspective that complements inspector general reports and agency testimony. And even in the underappreciated work of policy decommissioning — winding down programs that no longer serve their intended purpose — qualitative engagement can help Congress understand how to manage transitions without causing disruption to the people who depend on existing systems.

Yet Congress currently has no systematic way to gather this kind of ground-level intelligence at scale. Instead, it largely sits passively, waiting for information to come to it through whatever channels happen to be available — channels that are shaped more by who has the resources and sophistication to navigate the legislative process than by who has the most relevant knowledge to contribute.

This arrangement has a predictable bias. It privileges those with the know-how to find and exploit leverage points in the legislative process — well-resourced interests, established organizations, and insiders with strong relationships on Capitol Hill. It systematically underweights the perspectives of the practitioners, frontline workers, small businesses, and ordinary constituents who lack those connections but often have the most direct experience with how policies actually function.

There is no shortage of dedicated, knowledgeable Americans who would gladly contribute to these processes if asked. The problem is that Congress rarely asks, and rarely has the capacity to process the answers even when it does.

This is beginning to change. New technology opens up a range of tools, methodologies, and possibilities for Congress to actively solicit and analyze qualitative input related to policy and oversight in something close to real time. AI-assisted analysis tools can now surface patterns across hundreds of open-ended responses in hours rather than weeks. Asynchronous, mobile-friendly platforms can reach participants who could never make it to a formal hearing. Coalition networks can amplify outreach to populations that have historically been hard to reach. Many open questions remain about how Congress as an institution should think about integrating this kind of information into its policymaking processes — questions of verification, authority, and institutional culture that no tool can resolve on its own. But the technology is rapidly lowering the capacity-side barriers in ways that make experimentation increasingly worthwhile.

The Opportunity

In the early months of 2025, a specific opportunity came into focus. That year's federal workforce transitions, driven by the Department of Government Efficiency (DOGE), Reductions in Force (RIFs), and Deferred Resignation Programs (DRPs), resulted in the departure of a large number of career civil servants across agencies, many with deep programmatic expertise accumulated over decades. Unlike the steady trickle of individual retirements, this was a concentrated, simultaneous exit that made systematic outreach to a broad population of departing employees briefly feasible.

These departing employees were walking out the door carrying irreplaceable institutional knowledge: what works in the programs they ran, what legislative language creates unnecessary friction, what reforms could make a real difference, and what Congress does not understand about how its own laws actually function. That knowledge had a short half-life. Once these employees dispersed and moved on, it would become far harder to capture.

The project did not emerge from a standing start. POPVOX Foundation and its coalition partners had spent years working at the seams between Congress and the executive branch, accumulating a body of experience that made the value of this kind of knowledge capture immediately legible — and the urgency of acting on it unmistakable.

In 2024, POPVOX Foundation partnered with Georgetown University’s Massive Data Institute on the Interbranch Exchange, a project focused on strengthening informal connections between legislative staff, executive branch employees, civil society organizations, and academic researchers. The premise was deceptively simple — that some of the most consequential gaps in how Congress understands federal programs are not analytical but relational, and that creating low-barrier opportunities for cross-branch conversation could surface knowledge that formal channels consistently miss.

Alongside that work, POPVOX Foundation’s ongoing engagement with congressional caseworkers and agency legislative liaison staff had built a detailed picture of the friction points that emerge when legislation meets implementation. Caseworkers navigate these collisions daily: the programs with eligibility rules that contradict each other, the legislative language that makes sense on paper but creates impossible administrative burdens, the edge cases that no one anticipated but that real people fall into constantly. These are not abstract policy problems — they are specific, concrete breakdowns that the people closest to program delivery can identify with precision, but that rarely travel back up to the legislators who could fix them.

Conversations with former executive branch staff reinforced the pattern from the other direction. A recurring theme across these interactions was frustration with the barriers to getting operational knowledge to Congress: legislative affairs offices that filtered or blocked candid communication, congressionally mandated reports that had become compliance exercises rather than genuine feedback mechanisms, and a pervasive sense that Congress did not understand — and had limited means of learning — how its own laws actually functioned once they left the statute books and entered the world of implementation. The sentiment “why doesn’t Congress understand that...” came up with striking regularity, paired with an equally striking lack of any obvious channel through which that understanding could have been transmitted.

More broadly, a growing body of work on state capacity — from scholars, practitioners, and reform-oriented organizations — had sharpened attention to the consequences of poor interbranch communication. When Congress cannot effectively learn from the executive branch’s implementation experience, it legislates with incomplete information, creating downstream problems that compound over time: sedimented layers of contradictory requirements, programs that are technically authorized but operationally incoherent, and a steady erosion of the government’s ability to deliver on its own commitments. These are not just bureaucratic inconveniences — they are failures of democratic governance, because they degrade the public’s experience of the programs that Congress created on their behalf.

All of this prior work pointed toward a clear gap: Congress needed better mechanisms for capturing candid, ground-level intelligence from the people who implement federal programs, and those people needed a safe, structured way to share what they knew. The 2025 workforce transitions did not create that need — they made it urgent and, briefly, uniquely addressable.

POPVOX Foundation and coalition partners launched Departure Dialogues to seize that window. Each partner brought distinct and complementary expertise to the effort. The Partnership for Public Service — the preeminent nonpartisan organization dedicated to the federal workforce, with over two decades of experience championing civil servants and producing authoritative research on federal employee engagement and government effectiveness — provided deep institutional knowledge of the workforce landscape and critical distribution infrastructure for reaching departing employees. The Niskanen Center, one of the leading voices on state capacity and the importance of closing the loop between policy design and implementation, brought an analytical framework that situated the project within the broader challenge of building a government capable of delivering on its own promises. The Foundation for American Innovation, whose work spans governance, technology, and institutional reform, contributed expertise on the conditions that produce effective government and the tools that can support it. And Civil Service Strong — launched through Democracy Forward in late 2024 specifically to support federal employees navigating the upheaval of the workforce transitions — brought direct relationships with the departing workforce, an active fellowship program engaging former civil servants as researchers and advocates, and a community infrastructure that made outreach to potential participants far more feasible than it would otherwise have been.

Departing employees, no longer constrained by the obligations and political sensitivities of active federal employment, had both the freedom and — for many — the motivation to share their knowledge frankly. But the challenge was significant. This population was distributed across every region of the country, navigating considerable personal and professional disruption, and individual departing employees likely had only tangential relationships with members of the Departure Dialogues coalition. The political environment required careful attention to privacy, anonymity, and institutional neutrality throughout.

The project was designed from the start as a proof of concept: a test of whether a small, resource-constrained team could build a lightweight, replicable model for capturing qualitative stakeholder input and making it useful to Congress. This paper documents the methodology behind that effort — the decisions made, the tools chosen, what would be done differently, and what the model can teach institutions considering similar approaches. The specific findings from Departure Dialogues are addressed in a companion findings paper; the focus here is the process itself.

Project Design

If Departure Dialogues is meant to demonstrate a replicable model, the constraints under which it was built matter as much as the methods themselves. POPVOX Foundation is not a Congressional office or committee, but the operational constraints the team faced closely parallel those of one,¹ which is precisely what made it a useful test case.

Low budget.

No dedicated funding was raised for this project. Each coalition partner contributed staff time, intellectual input, and access to their networks, but there was no project-specific budget line. Every tool choice and design decision had to work within existing organizational resources. This constraint is directly analogous to a Congressional office or committee that operates on a fixed budget and may need to stand up a public input process quickly in response to rapidly evolving circumstances — without the luxury of a procurement cycle or dedicated appropriation.

Low headcount.

No additional staff were brought on board. The project was managed within POPVOX Foundation’s existing team of roughly ten people, all of whom were simultaneously managing other workstreams. Coalition partners contributed in the same mode — carving out time from existing portfolios rather than assigning dedicated personnel. A Congressional office running a similar effort would face the same reality: generalist staff fitting a new initiative around everything else on their plates.

Generalist expertise.

The POPVOX Foundation team knows government — how Congress works, how agencies operate, what the information gaps look like. But the team does not include trained qualitative researchers or methodological specialists. The methods had to work more or less out of the box for a team whose expertise was substantive rather than technical. This is a feature of the test case, not a limitation: if the model requires a trained research team to execute, it is not replicable by the institutions that most need it.

No geographic co-location.

The POPVOX Foundation team works remotely, distributed across time zones. The departing federal employees the project was trying to reach were similarly dispersed — while many had been based in Washington, the RIFs and DRPs scattered them across the country. In-person recruitment, which might have been the most natural approach for building trust with a population navigating significant professional disruption, was not available. Everything had to work digitally, asynchronously, and at a distance.

These constraints were not incidental — they were the point. If this model only works with dedicated funding, specialized staff, and physical proximity to participants, it is an interesting research project but not a tool that Congress can actually use. The question being tested was whether it could work without any of those things.

Project Parameters

Coalition Formation and Early Decisions

Departure Dialogues was designed as a coalition endeavor from the start, with the partners described above forming the core group and POPVOX Foundation managing the administrative and operational dimensions. The initial coalition conversations established several parameters that shaped everything that followed.

Nonpartisan framing.

The coalition made an early and deliberate decision that Departure Dialogues would be explicitly nonpartisan. This was partly a natural reflection of the organizations involved — the Partnership for Public Service, the Niskanen Center, and POPVOX Foundation all operate in nonpartisan or cross-partisan modes. But it was also a strategic choice to differentiate the project within a landscape where much of the public conversation about the federal workforce transitions had become deeply polarized. The goal was to produce material that would be credible and useful to Congressional offices regardless of party — and to signal to potential participants that this was not an advocacy exercise but a genuine effort to capture implementation knowledge for legislative use.

Substantive focus.

The coalition was not interested in general sentiment about the workforce transitions. The core research questions were developed through multiple rounds of discussion, stress-testing, and revision among the partners, and were organized around three areas: how Congress could better gather information from current and former federal employees; what barriers those employees experience in communicating implementation realities to the legislative branch; and what specific reforms participants would recommend based on their experience. The questions were designed to elicit the kind of ground-level intelligence about program implementation that the earlier sections of this paper argue Congress systematically lacks — not grievances, not political commentary, but operational knowledge.

Comfort with AI, transparency about it.

The coalition was comfortable using AI-assisted analysis tools — indeed, that capability was central to the case for replicability. But partners flagged early on that other stakeholders, including potential participants and Congressional audiences, might be less familiar or less comfortable with AI-powered synthesis. The group agreed to treat the use of AI tools as a deliberate methodological choice that would be explained openly: what the tools do, how they work, what their limitations are, and where human judgment enters the process. This transparency was both an ethical commitment and a practical one — if the model is to be adopted by Congressional offices, the tools need to be ones that staff can explain and defend.

Tool Selection

With the project parameters established, the team turned to selecting tools that met the constraints: low cost, low technical barrier, asynchronous-friendly, and capable of handling qualitative data at a scale beyond what manual analysis could support.

TheirStory

TheirStory was selected as the primary front end for video and audio contributions. TheirStory is an oral history platform whose testimonial feature presents interview questions on screen and records participants’ responses directly, with no scheduling and no staff facilitation required. A participant could record their contribution from their phone in five minutes, or take longer to work through a fuller set of questions. The platform’s lightweight, self-guided design aligned with a core project goal: someone with one thing Congress should know about their program ought to be able to share it with minimal friction. TheirStory also supported the project’s emphasis on preserving the richness of first-person narrative — tone, emphasis, the texture of how someone describes their experience — rather than reducing everything to written text.

Talk to the City

Talk to the City (T3C), an open-source AI-powered analysis tool developed by the AI Objectives Institute, was selected for synthesis and pattern identification. T3C processes open-ended qualitative responses and uses large language models to organize them into clusters of related ideas, surfacing shared themes across contributions without requiring manual coding. This capability is central to the replicability argument: qualitative analysis at this scale would historically have required dedicated research staff and weeks of manual work. With T3C, a small team can process contributions and surface actionable themes quickly enough to remain relevant to a fast-moving legislative or oversight context. The AI Objectives Institute’s focus on building tools that support democratic deliberation processes also aligned with the project’s institutional values.

Google Forms

Google Forms served as the primary written submission channel — a familiar, zero-cost tool that any participant could access without creating an account or learning a new platform. For participant tracking and project management, the team used the POPVOX Foundation website for intake and Google Sheets as the operational backbone: tracking who had signed up, what participation format they selected, whether they had completed their contribution, and when follow-up was needed. This was not elegant infrastructure, but it was functional, free, and — critically — something that any small team could replicate without specialized tools or IT support.

Integration and Workflow

These tools were not configured to talk to each other out of the box, and integrating them into a coherent workflow was one of the less glamorous but most operationally important dimensions of the project. The end-to-end process worked as follows.

A potential participant would start by completing an intake form on the POPVOX Foundation website (built on Squarespace). The form captured basic information about their federal service: whether they were a career civil servant, political appointee, or contractor, and whether they had already separated from service and when. It also recorded their preferences for how their contributions should be attributed. Intake responses were collected in a spreadsheet that became the project's source of truth: the authoritative record of who was eligible, what they had consented to, and how their remarks could be credited.

In the early pilot phase, the team manually reviewed intake submissions for eligibility and then sent eligible participants a link to either the Google Form (for written responses) or the TheirStory platform (for video). This created a deliberate screening step but also introduced friction: every additional email and every delay between signup and participation was an opportunity for drop-off. As the project scaled, the team shifted to providing participation links directly on the post-intake confirmation page, so that someone who had just completed the form could continue immediately into their chosen format. This meant the team was no longer pre-screening for eligibility before participation: someone who turned out to be ineligible (still actively employed, for instance) might complete a submission that could not be used. In practice, this was a manageable trade-off. The volume of ineligible submissions was low, and the reduction in drop-off was significant.

After completing their contribution — whether written or recorded — participants received a thank-you email or were directed to a thank-you page that included additional resources for departing federal employees compiled from coalition partners. For participants who had expressed interest through the intake form but had not yet submitted a contribution, the team sent several follow-up emails over the following weeks. This manual follow-up was time-intensive but important: many potential participants were navigating major life transitions and genuinely intended to contribute but needed a prompt.

When the first interactive report was ready for publication, the team followed up individually with all participants to provide access and to confirm or adjust their attribution preferences. For coalition partners and for the public-facing dataset, all participant information was transferred into a separate, clean spreadsheet that contained only the attributes each participant had explicitly authorized for sharing. This was also the stage at which the team screened responses against the project’s content standards: no personally identifiable information about third parties, no personal attacks, no confidential or sensitive material, no whistleblower disclosures (which require their own legal protections and channels), and no content that was primarily political rather than substantive. In the final phase, participants who expressed interest in a group interview were contacted by email and scheduled through Zoom — a straightforward addition that required no new tooling.
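For a team that wanted to automate the step of copying only authorized attributes into the public dataset, it could be sketched in a few lines. This is a minimal, hypothetical illustration and not the tooling the project used, since the team did this by hand in a separate spreadsheet. It assumes each intake row is a Python dictionary. The field names (`name`, `agency`, `attribution`, `response`) are assumptions, and so are the labels `identified`, `agency_only`, and `anonymous`, which stand in for the fully identified, agency-only, and anonymous options the project offered.

```python
# Hypothetical attribution labels mapped to the fields each one allows to be
# shared publicly. The response text itself is always included.
ALLOWED_FIELDS = {
    "identified": ("name", "agency"),
    "agency_only": ("agency",),
    "anonymous": (),
}


def to_public_record(intake: dict) -> dict:
    """Copy only the attributes a participant authorized for sharing.

    `intake` is a hypothetical intake-spreadsheet row with keys such as
    "participant_id", "name", "agency", "attribution", and "response".
    A missing or unrecognized attribution choice falls back to anonymous,
    so a data-entry error never exposes identifying details.
    """
    level = intake.get("attribution", "anonymous")
    fields = ALLOWED_FIELDS.get(level, ())
    record = {"response": intake["response"]}
    for field in fields:
        if intake.get(field):
            record[field] = intake[field]
    return record


def build_public_dataset(rows: list[dict]) -> list[dict]:
    """Produce the clean, shareable dataset from the full intake rows."""
    return [to_public_record(row) for row in rows]


rows = [
    {"name": "A. Example", "agency": "Agency X", "attribution": "agency_only",
     "response": "Sample text."},
]
print(build_public_dataset(rows))
# [{'response': 'Sample text.', 'agency': 'Agency X'}]
```

The design choice worth noting is the default. Internal fields such as an ID are never copied, and anything other than an explicit permission is treated as anonymous. Screening responses against the project's content standards remained a human judgment call and is not modeled here.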

The net result was a workflow that involved a significant amount of hand-sorting and manual email management: tracking who was eligible, who had completed their contribution, who needed follow-up, and who had requested changes to their attribution. The team was aware throughout that portions of this workflow could have been automated — a more integrated intake-to-participation pipeline, automated follow-up sequences, or a participant portal for managing attribution preferences. Given more time or a second iteration, those investments would be worth making. But the volume of participants was manageable enough that manual processes worked, and the manual approach had a significant upside: the most critical data — the intake and submission tracking spreadsheets — remained entirely within the team’s control, with no dependency on third-party platform integrations or automated processes that might introduce errors in sensitive areas like eligibility screening or attribution management. For a proof of concept, flexibility and control mattered more than efficiency.

How It Worked: Narrative Timeline

Phase 0: Friends and Family (Spring 2025)

Before any public launch, the team ran a small-scale test, aiming for approximately five to ten early participants recruited through personal and professional networks. The goal was not data collection but process validation: did the intake flow make sense? Could participants navigate TheirStory without guidance? Were the questions eliciting the kind of substantive, implementation-focused responses the project was designed to capture?

This phase surfaced both useful feedback and instructive mistakes. One participant recorded nine separate videos — one for each question — rather than a single continuous recording, revealing an ambiguity in the instructions that was easy to fix but would have been difficult to spot without testing. Several participants asked detailed questions about anonymity guarantees, prompting the team to sharpen its language about what the project could and could not promise.

That framing became a defining feature of the project’s participant communications. Departure Dialogues was explicit that contributions would be part of a public archive, with Congress as the intended audience. The team would preserve participants’ anonymity to whatever degree they chose — fully identified, agency-only, or completely anonymous — and would share only the identifying information each participant gave permission for. But participants were advised not to share confidential, sensitive, or classified information, and were asked to consider whether they would be comfortable with a Member of Congress from either party reading their contribution in a public forum. The trade-off was intentional: the project’s value to Congress depended on producing findings that could be engaged with openly, not held in confidence.

Phase 1: Launch and Outreach (Summer–Fall 2025)

In parallel with the friends-and-family testing, the coalition built out a communications toolkit for broader outreach: social media graphics, newsletter blurbs, suggested text for email and messaging, and other materials designed to make it easy for partner organizations and individual advocates to share the project within their networks. This toolkit-based approach — equipping partners and allies to do distributed outreach rather than relying on centralized recruitment — proved essential. No single organization had the reach to contact thousands of potential contributors across dozens of agencies, but the coalition’s combined networks, amplified by snowball sharing from early participants, generated substantial coverage in newsletters, on social media (LinkedIn proved particularly effective for this population), and in some media outlets.

With the toolkit ready and the intake process validated, the project moved to formal launch. Outreach flowed from the coalition outward: partner organizations shared through their channels, early participants shared within their professional communities, and the project’s visibility built organically. Once a potential participant completed the intake form on the POPVOX Foundation website, they were directed to their chosen participation format. Those who signed up but had not submitted within a week received a follow-up email — a simple but important step, given that many potential participants were navigating significant personal and professional disruption and may have intended to contribute but lost track.
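The one-week follow-up is one of the steps the team tracked by hand and identified as a candidate for automation. Here is a minimal Python sketch of that rule. It assumes a hypothetical intake record with a sign-up date, an optional submission date, and a flag recording whether a follow-up was already sent. These field names are illustrative and are not the project's spreadsheet columns, and "within a week" is assumed to mean seven days.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# Assumption: "had not submitted within a week" is modeled as 7 days.
FOLLOW_UP_AFTER = timedelta(days=7)


@dataclass
class IntakeRecord:
    """Hypothetical row in an intake-tracking spreadsheet."""
    email: str
    signed_up: date
    submitted: Optional[date] = None
    followed_up: bool = False


def needs_follow_up(record: IntakeRecord, today: date) -> bool:
    """True if the person signed up at least a week ago, has not submitted,
    and has not already been sent a follow-up email."""
    return (
        record.submitted is None
        and not record.followed_up
        and today - record.signed_up >= FOLLOW_UP_AFTER
    )


def follow_up_queue(records: List[IntakeRecord], today: date) -> List[str]:
    """Email addresses that should receive the one-time follow-up."""
    return [r.email for r in records if needs_follow_up(r, today)]


if __name__ == "__main__":
    records = [
        IntakeRecord("a@example.org", date(2025, 7, 1)),
        IntakeRecord("b@example.org", date(2025, 7, 1), submitted=date(2025, 7, 3)),
        IntakeRecord("c@example.org", date(2025, 7, 6)),
    ]
    print(follow_up_queue(records, today=date(2025, 7, 8)))  # ['a@example.org']
```

The sketch only produces a queue and sends nothing. That leaves a person free to review the list before any email goes out, which keeps the human control over sensitive tracking data that the manual approach provided.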

Relevant Congressional committees and the Office of Management and Budget were notified of the project. The first round of contributions was processed through T3C, and the initial interactive findings report was published in fall 2025. Making that first report public early served a dual purpose: it demonstrated to potential participants what their contributions would look like in practice — how they would be synthesized, presented, and contextualized — and it provided the coalition with a concrete product to point to in continued outreach. Showing rather than describing what the project would produce made subsequent recruitment significantly easier.

Phase 2: Expanded Collection (November 2025–January 2026)

The second phase of data collection launched in November 2025, incorporating lessons from Phase 1 and expanding the available participation formats. The most significant addition was small-group focus groups, a format that emerged organically from participants who preferred a group conversation to a solo recording. Small groups offered several advantages: they allowed participants to build on each other’s observations, often sparking deeper reflection; they provided an additional layer of practical anonymity, since individual comments were embedded in a group discussion rather than standing alone; and they created a space for the kind of collegial exchange that many participants — having recently left agencies where they had worked alongside peers for years — found both comfortable and generative.

In this phase, the team partnered directly with specific agency alumni groups to organize small-group sessions. The Environmental Protection Network, an organization of EPA alumni, was a particularly valuable partner, helping to coordinate sessions with former EPA staff whose programmatic expertise was directly relevant to Congressional oversight priorities. A handful of one-on-one interviews were also conducted for participants who wanted to contribute but were experiencing technical difficulties with the self-guided platforms.

Outreach continued through the holiday period, and submissions closed in January 2026. The complete dataset was processed through T3C for the final analysis, with findings published in the companion report.

Results

Fifty individuals participated in Departure Dialogues through a mix of formats: 27 submitted responses via Google Forms, 11 took part in small-group interviews, 9 contributed video through TheirStory, and 3 participated in one-on-one interviews. Participants represented 15 agencies across the executive branch. The heaviest concentrations came from USAID (9 participants) and from HHS and its sub-agencies, including FDA, NIH, HRSA, and SAMHSA (9 participants combined), followed by EPA (6) and the State Department (3). Other agencies represented included GSA, DHS, the Department of Education, USDA, IRS, and several others, reflecting meaningful breadth across both domestic and foreign policy functions.

Among those who listed their roles, participants held positions spanning a wide range of seniority and function, from program analysts and specialists to directors, deputy directors, senior advisors, and a vice chairman. Roles included technical and scientific positions (Physical Scientist, NEPA Specialist, Clinical Reviewer), operational and administrative functions (Grants Management Specialist, Transportation Specialist, HR Supervisor), and senior leadership (Assistant Administrator, Chief Well-being Officer). Many participants held Foreign Service or internationally focused roles, consistent with the strong USAID and State Department representation.

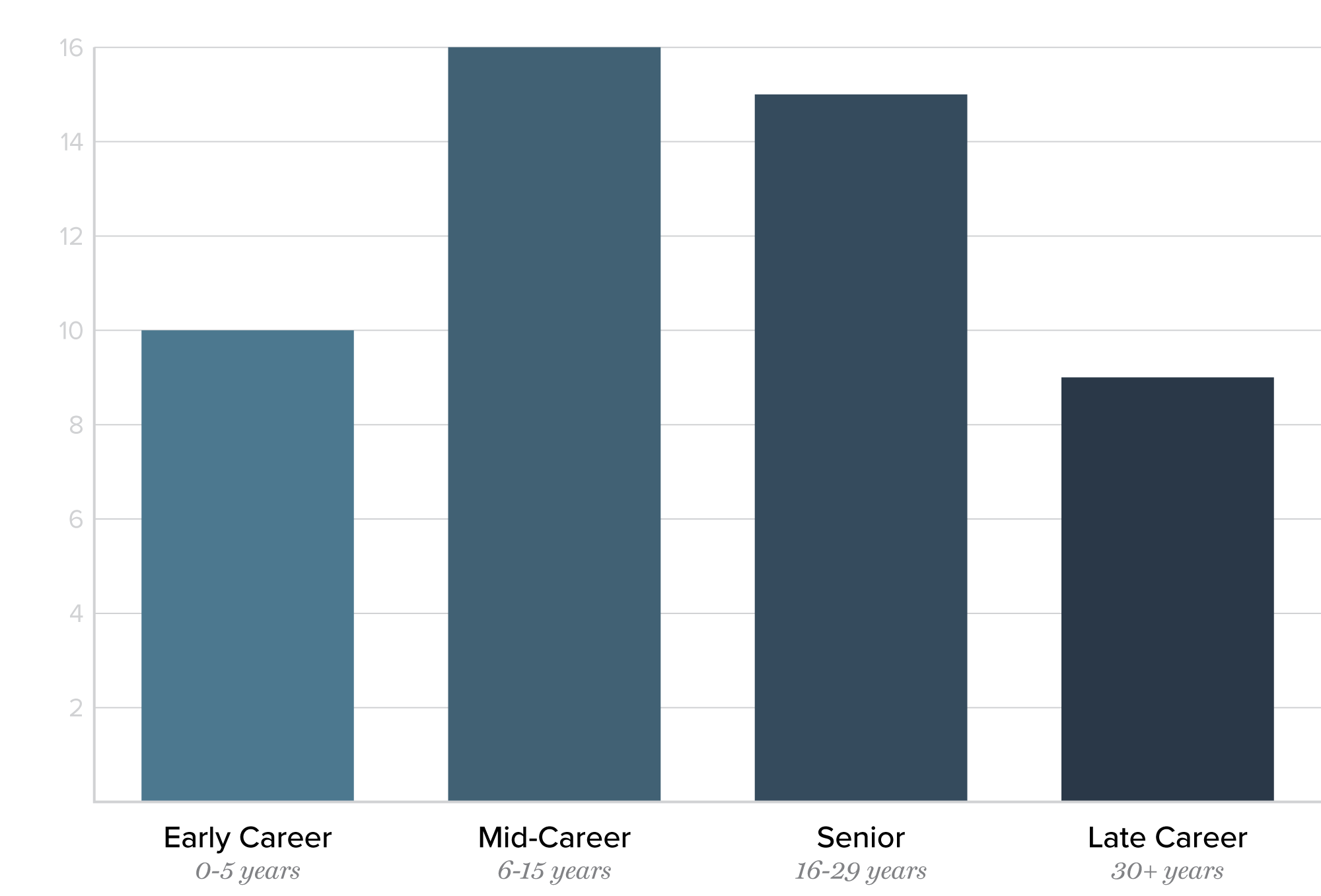

The participant pool skewed toward experience. Total government service ranged from under one year to 40 years, with an average of 15 years overall and 14.3 years at participants’ most recent agency. Participants fell into four broad seniority tiers: 10 were early-career (0–5 years), 16 were mid-career (6–15 years), 15 were senior (16–29 years), and 9 had served 30 or more years. Nearly half of all participants had 16 or more years of service, and the 9 longest-serving participants each brought three decades or more of institutional experience. This seniority profile suggests that while the proof of concept drew a relatively small sample, it reached people with deep firsthand knowledge of how federal agencies function — and, in many cases, how they have changed over time.
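The reported counts can be checked with simple arithmetic. The Python sketch below restates the format and seniority-tier counts from the text and shows one way to assign years of service to the four tiers. It then confirms that both breakdowns total 50 and that 24 participants (48 percent) had 16 or more years of service. The tier boundaries for fractional years are an assumption, because the text gives only whole-year ranges.

```python
# Counts as reported in the Results section.
FORMAT_COUNTS = {
    "Google Forms": 27,
    "small-group interviews": 11,
    "TheirStory video": 9,
    "one-on-one interviews": 3,
}

TIER_COUNTS = {
    "early-career (0-5 years)": 10,
    "mid-career (6-15 years)": 16,
    "senior (16-29 years)": 15,
    "30+ years": 9,
}


def tier_for(years: float) -> str:
    """Assign total years of service to one of the four seniority tiers.

    Assumption: fractional years stay in the lower tier until the next
    whole-year boundary (e.g. 5.9 years counts as early-career).
    """
    if years < 6:
        return "early-career (0-5 years)"
    if years < 16:
        return "mid-career (6-15 years)"
    if years < 30:
        return "senior (16-29 years)"
    return "30+ years"


total = sum(FORMAT_COUNTS.values())
tier_total = sum(TIER_COUNTS.values())
sixteen_plus = TIER_COUNTS["senior (16-29 years)"] + TIER_COUNTS["30+ years"]
share = sixteen_plus / total

if __name__ == "__main__":
    print(f"Participants by format: {total}; by tier: {tier_total}")
    print(f"16+ years of service: {sixteen_plus} of {total} ({share:.0%})")
    # Shortest (about 10 months) and longest (40 years) tenures.
    print(tier_for(10 / 12), "|", tier_for(40))
```

Running it prints totals of 50 for both breakdowns and "24 of 50 (48%)". Both figures are consistent with the "nearly half" characterization above.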

Quick Stats

Total participants: 50

Format: 11 in small-group interviews, 3 in one-on-one interviews, 9 through TheirStory video, 27 through Google Forms

Length of Service

Average length of service: 15 years

Longest tenure: 40 years

Shortest tenure: ≈ 10 months

Agency

Participants came from 23 different agencies:

USAID: 9

HHS (including FDA, NIH, HRSA, and SAMHSA): 9

EPA: 6

State Dept.: 3

GSA (including 18F): 3

DHS (including FEMA): 3

IRS: 2

USDA (including Forest Service): 2

DOJ (Office on Violence Against Women): 1

Dept. of Veterans Affairs: 1

ICE: 1

Dept. of Education: 1

Dept. of Energy: 1

National Science Foundation: 1

Merit Systems Protection Board: 1

Observations & Lessons for Future Implementers

The following observations are offered for legislative offices, committees, or other organizations considering a similar approach. The model is not specific to former federal employees — it could be applied to teachers, veterans, small business owners, disaster survivors, or any population with direct experience relevant to a legislative or oversight question.

Distribution networks are the binding constraint — and the trust mechanism.

Reach was a direct function of the networks the coalition could activate. No amount of methodological rigor matters if the people you want to hear from never learn the project exists. But distribution was not only a logistical challenge — it was a trust challenge. Departure Dialogues asked people to share candid reflections about their professional experience during a period of significant personal disruption, in a political environment charged with suspicion about how such reflections might be used. This was a high-trust activity in a low-trust environment, and the willingness of participants to engage is something the team does not take for granted.

What made that trust possible was not primarily the project’s privacy protocols or institutional neutrality, though both mattered. It was the credibility of the organizations doing the outreach. When the Partnership for Public Service or Civil Service Strong shared the project through their channels, that carried a signal that the project had been vetted by organizations that departing federal employees already trusted. Coalition partners were not just amplifiers; they were trust proxies. For anyone replicating this model, the implication is clear: identify who you want to hear from, and then identify who those people already trust, before finalizing the research design.

For Congressional and legislative entities specifically, this suggests treating a structured input process less as an internal data-gathering exercise and more as an opportunity to engage stakeholder groups as partners in the process itself. There is precedent for this. In 2019, the House Natural Resources Committee, led by Chair Raúl Grijalva, used the POPVOX platform to develop the Environmental Justice for All Act through an open, collaborative process — soliciting input from environmental justice communities, advocacy organizations, and individual constituents at every stage from initial principles through draft bill text. The committee’s outreach director described the platform as “an equalizer” — participants did not need a federal lobbyist to be part of the process.

That model demonstrated that when a committee invests in distribution and partnership with the communities most affected by a piece of legislation, the result is not just better data but deeper buy-in: the resulting bill reflected priorities that would not have surfaced through traditional channels, and the stakeholder groups that participated became invested in its success. Departure Dialogues operated at a different scale and for a different purpose, but the underlying principle is the same: distribution networks are not just how you reach people — they are how you earn the right to ask.

Match the format to the question, and be explicit about what the model can and cannot do.

Departure Dialogues used multiple participation formats, and the most important lesson was that each serves a different purpose — and that being deliberate about the match matters more than offering every option from the start.

Written and video submissions through Google Forms and TheirStory were scalable and asynchronous: a participant could contribute from their phone in five minutes, on their own schedule, with no staff involvement. These formats were well suited to early-stage theme identification — casting a wide net, surfacing the broad contours of what departing employees wanted Congress to know. But they were one-directional. A written response cannot be probed; a recorded video cannot be asked a follow-up question.

Small-group focus groups and one-on-one interviews added depth at the cost of staff time. They allowed participants to build on each other’s observations, enabled the kind of follow-up questioning that surfaces nuance, and — in the case of small groups — created a space for collegial exchange that many participants found both comfortable and generative. But each session required scheduling, facilitation, and post-session processing that asynchronous formats did not.

The practical recommendation is to start asynchronously. Use scalable formats to identify themes and build initial participation, then deploy interactive formats selectively to go deeper on the issues that emerge. This sequencing also builds trust: participants who have already seen the project’s first report — who know what happens to their contributions — are more willing to invest the time in a longer, more personal conversation.

Equally important is clarity about what this kind of model can and cannot support. A lightweight qualitative input process is well suited for agenda-setting, early-stage information gathering, and informing lines of inquiry. It is not designed to carry the evidentiary weight of a formal oversight proceeding. The participation is self-selected rather than representative. Departure Dialogues did not verify participants’ identities — which was appropriate for a proof of concept, but would need to be designed in for higher-stakes contexts where credibility depends on confirmed attribution.

For any future implementation, implementers should be deliberate about where the project sits on the spectrum between exploratory input and formal evidence, and they should set expectations with participants, partners, and Congressional audiences accordingly. The friction that verification adds is real, but so is the credibility it provides, and the right balance depends entirely on the intended use.

The demand is real, and the value extends beyond the dataset.

One of the project’s most striking findings was not substantive but structural: there is enormous unmet demand for structured channels through which knowledgeable Americans can contribute to policy processes. Participants did not need to be persuaded to engage. They engaged readily, substantively, and with a level of candor and specificity that reflected genuine investment in helping Congress understand how federal programs actually function. The bottleneck was not willingness, but infrastructure. Legislative entities considering a similar approach should not assume that generating participation will be the hard part. Designing for accessibility and trust usually is.

Perhaps more unexpectedly, the project generated value that extended well beyond the dataset itself. Two participants who connected through Departure Dialogues went on to form “We the Doers,” a nonprofit organization of former senior civil servants focused on improving government effectiveness. The project had been designed as a data collection mechanism, but it became, in practice, a catalyst for community formation and sustained civic engagement. People who had recently lost their professional community found, through the act of contributing to a shared project, the beginnings of a new one. This was not an intended outcome, but in retrospect it was not accidental either: any process that asks people to reflect on their expertise and share it with a purpose larger than themselves creates conditions for connection. Future implementations should design for community, not just contribution — recognizing that the network of participants may ultimately be as valuable as what they submit.

Where This Model Fits in the Policy Cycle

The standard policy cycle model — through which issues are identified, policy is shaped, programs are implemented, and outcomes are assessed — offers a useful framework for thinking about where a model like Departure Dialogues adds value. Congress sits at the center of this cycle in a distinct way, both authorizing policy and overseeing its execution. The model fits some stages better than others.

Agenda setting.

This is where the model has its greatest comparative advantage. Frontline practitioners and affected stakeholders often see problems long before they appear in formal reports. A small team can quickly stand up a participation mechanism, surface themes with AI-assisted analysis, and produce a summary of emerging issues in weeks — giving Congress an accessible channel for early signals that formal oversight mechanisms have not yet captured.

Formulation.

As Congress moves from identifying issues to shaping responses, practitioner input becomes more specific and more valuable. A structured input process can surface where existing language creates unanticipated burdens, what approaches are working elsewhere, and how a proposed policy would affect different populations, all in a form directly useful to staff drafting legislation.

Implementation.

This is a critical feedback point. A lightweight input model can capture early signals from those implementing a law — what is working, what is breaking down, what adjustments would help — before problems become entrenched. Departure Dialogues applied this at a specific transition moment; a sustained version could make this feedback continuous rather than episodic.

Reauthorization.

Over time, implementation realities drift from legislative intent — and the frontline knowledge needed to close that gap walks out with every departure. This model can help maintain an ongoing connection to program implementation between reauthorization cycles, building institutional memory at relatively low cost. When reauthorization arrives, structured input from those who implemented the original legislation is among the most valuable inputs drafters can have.

The model has less direct application at the adoption stage, where legislative decisions involve deliberation and votes that qualitative input cannot substitute for. Even so, structured input gathered earlier can inform testimony and give Members concrete, constituent-grounded evidence to draw on during debate. Similarly, formal evaluation typically requires more rigorous methodology than this model provides, but the model can play a supporting role: surfacing questions that formal evaluation should prioritize, identifying unanticipated effects, and gathering the practitioner perspectives that give quantitative findings their interpretive context.

A note on fit.

This model is a complement to formal processes, not a substitute. When findings need to carry evidentiary weight, the lack of verification, self-selected participation, and qualitative nature of the data are real constraints. Its strength is in the earlier and softer stages of the cycle: surfacing issues, informing questions, and maintaining feedback loops that would otherwise go dark.

Conclusion

The specific findings from Departure Dialogues — what departing federal employees shared about how Congress can better understand the programs it authorizes — are addressed in the companion findings report. The focus of this paper has been the process: how a small team with limited resources built a model for capturing qualitative stakeholder input, what worked, what required adaptation, and what the model can teach institutions considering similar approaches.

But there is one final observation that belongs here rather than in the findings report, because it is about the method itself and what it does to the teams that undertake it.

Earlier, this paper invoked qualitative researcher Chelsea Mauldin’s argument that policy institutions have a persistent bias toward quantitative metrics, not because those metrics are more meaningful, but because they are easier to produce and process. That bias has consequences. It shapes what Congress pays attention to, what it asks, and what it believes it knows. And it quietly devalues the richest source of intelligence available to a legislature: the textured, contextual, experiential knowledge that comes from the people closest to how policy actually works.

Designing and running a process like Departure Dialogues changes that default. It forces a team to think concretely about what qualitative insight is, where it comes from, how to solicit it without leading it, and how to make it legible to an institution that is not accustomed to receiving it. It requires deliberate choices about where in the policy cycle this kind of input is most valuable — and honest reckoning with where it is not. It builds, through practice, the institutional muscles that Mauldin describes: the capacity for non-linear synthesis, for sitting with complexity, for finding the unexpected connections between what one person said and what another person said that neither of them would have identified on their own.

Those muscles matter beyond any single project. The pacing problem that motivates this work — Congress’s difficulty in keeping up with the speed and complexity of the challenges it faces — is not going to be solved by any one tool or method. But it can be addressed, incrementally, by building the habit of seeking out qualitative intelligence from the people who have it, developing the infrastructure to process it, and creating the institutional expectation that this kind of input is not a supplement to the real work of legislating but a core part of it. If Departure Dialogues demonstrates anything, it is that the barriers to doing this are lower than most people assume — and that the value, both in what is learned and in what the process itself teaches, is higher.

Acknowledgments

This project reflects a shared belief — across organizations that don’t always agree — that preserving institutional knowledge from departing federal employees is work that transcends any singular political moment. Capturing that knowledge and strengthening the feedback loop between the Executive branch and Congress requires a collaborative, nonpartisan effort, and we are grateful to the partners who brought that commitment to this work.

We are grateful to our coalition partners — the Partnership for Public Service, the Niskanen Center, the Foundation for American Innovation, and Civil Service Strong — for their early support, organizational expertise, dedication to a better federal government, and shared conviction that this moment called for new approaches to old problems.

The Departure Dialogues methodology depended on technology that made large-scale qualitative listening possible. The AI Objectives Institute and the Talk to the City (T3C) team brought both technical sophistication and genuine care for the integrity of participant voices to the work of synthesizing responses at scale. TheirStory provided the platform through which participants shared their experiences, offering an accessible and thoughtful interface for what was, for many contributors, a meaningful act of reflection.

Outreach for this project was only possible because organizations with deep roots in the federal workforce community opened their networks and lent their trust to this effort. We thank the Environmental Protection Network, the 18F alumni community, the American Federation of Government Employees (AFGE), the National Treasury Employees Union (NTEU), the National Association of Retired Federal Employees (NARFE), the Federal Legal Defense Fund, FedFam, Humans of Public Service, and WellFed for helping us reach the people whose voices are at the heart of this report.

We are grateful to the journalists who helped bring Departure Dialogues to a wider audience. Jason Briefel of FedManager was among the first to cover the project, and his early write-up helped us reach participants we might not otherwise have found. Sean Newhouse of Federal News Network provided thoughtful coverage of the project’s goals and methodology, and Terry Gerton of Federal Drive gave us the opportunity to share the work with the Federal Drive’s audience at a critical moment in our recruitment. Their interest in — and enthusiasm for — the work of departing federal employees made a real difference.

Above all, we are grateful to the federal employees who took the time to participate. This project exists because of them.

¹ Additional operational parallels to Congressional offices include compressed timelines analogous to funding and election cycles; generalist staff covering multiple issue areas; remote coordination across time zones; limited budget for external tools; and reliance on coalition networks rather than formal institutional research infrastructure.