From Use Cases to Institutional Choices

What the last day of the Association of Secretaries General of Parliaments (ASGP) debate at the 152nd IPU Assembly in Turkey revealed about how parliaments are organizing around AI

BY BEATRIZ REY

On the final day of the 152nd Inter-Parliamentary Union (IPU) Assembly, the Association of Secretaries General of Parliaments (ASGP) turned to a question that increasingly structures parliamentary reform: how legislatures are adopting AI.

The discussion opened with a framing that stayed with me. Andy Richardson, Program Manager at the IPU’s Center for Innovation in Parliaments, noted that, so far, “most parliaments are adopting AI primarily to generate efficiency gains in the legislative process.” It is a straightforward and revealing observation that tells us where parliaments are starting from.

What followed, however, suggested that this is only part of a broader trajectory. As Silke Albin, Deputy Secretary General of the German Bundestag, put it, the real shift ahead is moving from “AI use case introduction” to “AI transformation.” That distinction ran through the entire debate. The issue is no longer whether parliaments are experimenting with AI but whether those experiments are adding up to something coherent.

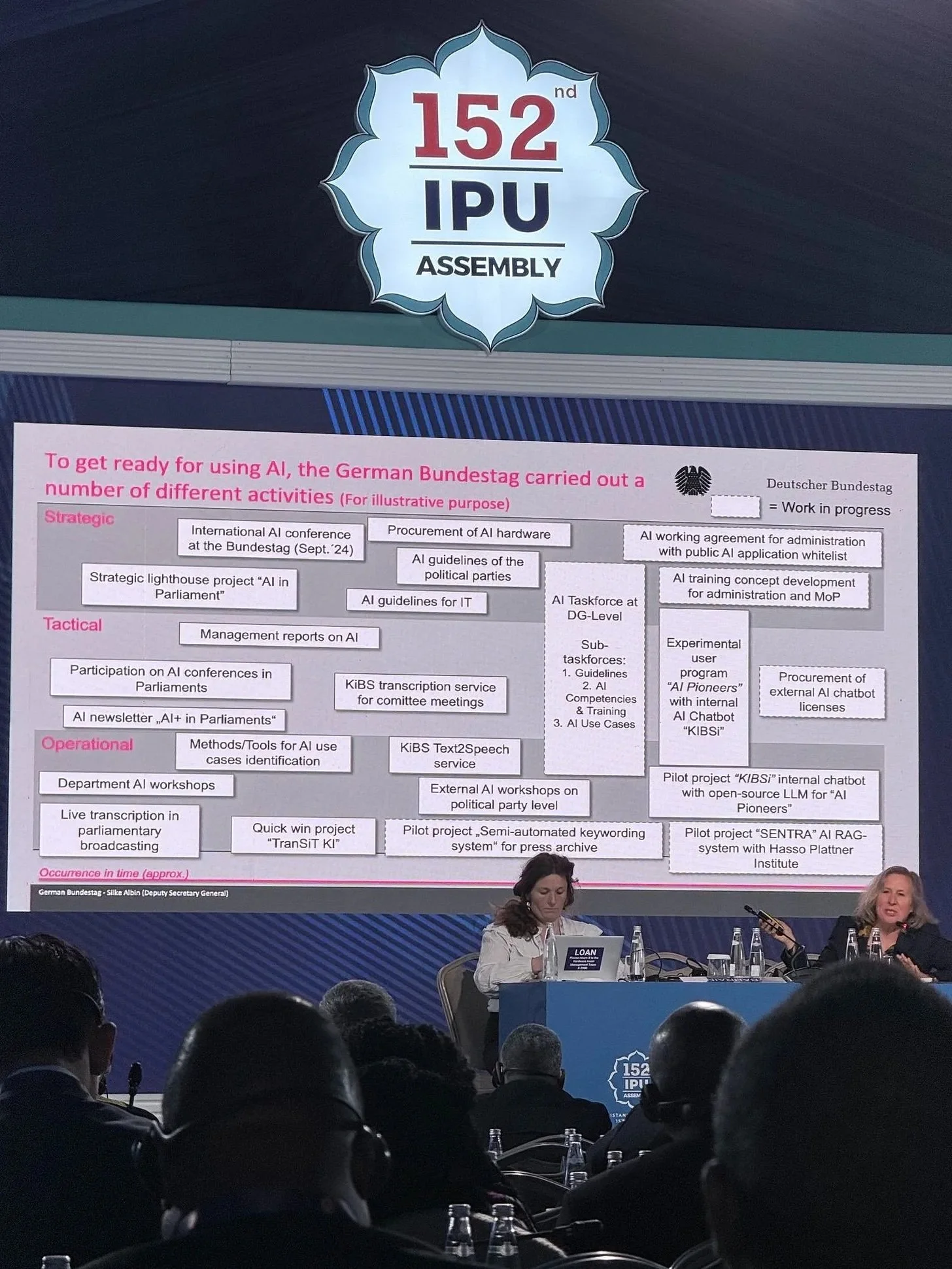

The German Bundestag case is a useful place to start precisely because of its caution. Rather than moving directly to large-scale deployment, the Bundestag has approached AI as a layered process. As Albin explained, its work can be understood across strategic, tactical, and operational levels. The accompanying image shows examples of each layer and where they overlap.

At the operational level, AI is already embedded in core workflows: automated transcription in committees, text-to-speech applications for internal communication, and AI-supported subtitling in plenary broadcasts. At the same time, programs such as “AI Pioneers” create structured spaces for experimentation across the administration, generating use cases while also building institutional familiarity. Pilot projects, such as the SENTRA search system or internal chatbot testing, add another layer, allowing the Bundestag to test technologies under controlled conditions before wider rollout. Surrounding all of this is a deliberate investment in capacity: training programs, internal guidance, and tools like the “AI User’s Toolbox.”

What this careful approach makes visible is a sequencing logic: experimentation, testing, deployment, and training are not separate tracks, but part of a single institutional process.

The UK Parliament case, presented by Sarah Davies, Clerk Assistant of the UK House of Commons, surfaces the same issue from the opposite direction. Instead of layering, the risk here is fragmentation. AI initiatives are emerging through multiple pipelines: dedicated projects, digital value streams, savings programs, and bottom-up “citizen development.” The problem is not a lack of activity, but too many parallel tracks that do not necessarily connect.

Davies’ intervention sharpened this point by focusing on governance and evaluation. Governance, in her framing, is about how these pipelines connect: how projects move through decision-making structures and align with existing priorities. Evaluation, meanwhile, addresses a subtler issue: the same AI project can generate entirely different interpretations of value depending on who is looking at it. Without a shared understanding of the problem being solved, outcomes fragment just as much as pipelines do.

Her concrete examples made this tension visible.

Using semantic search, a pilot in the Table Office helped manage a surge in written parliamentary questions — rising from around 300 to over 500 per day — by identifying duplicates more efficiently. But attempts to automate editing proved less successful, forcing teams to recalibrate expectations. At the same time, work with Hansard and Parliamentary Digital Services showed more promise, particularly in improving access to parliamentary data.

In automatic speech recognition, the focus is not just on efficiency but on reallocating resources. Trials aim to replace re-speaking in British Sign Language subtitling, potentially freeing up funds to expand services elsewhere. Parallel efforts are exploring how AI can support Hansard transcription while preserving parliamentary standards.

In summarizing and drafting, pilots in committee work in the House of Lords show how AI can help process large volumes of written evidence. But these are being accompanied by guidance and training, reflecting concerns about bias and reliability.

And in the case of Copilot and agent-based tools, the limits of adoption become particularly clear. While some applications, such as research assistants or HR query tools, show promise, others have run into issues around data access, cost, and safety filters. In one case, an MP was blocked from drafting a newsletter due to content restrictions. The result has been a more cautious, phased rollout.

If the presentations laid out these experiences, the discussion that followed revealed where the real friction lies.

One intervention from Turkey, for instance, pointed to the importance of where experimentation happens. The Turkish parliament’s experience with a domestically developed transcription tool (capable of working offline and resilient to cyber risks) highlighted familiar challenges: multiple speakers, accents, background noise. Davies’ response was telling: committee settings often provide a more controlled environment to test these tools before scaling them to plenary sessions.

Questions from Canada and others shifted the focus to data security, but quickly exposed a deeper issue. As Davies noted, the risk is not only what AI tools do, but how parliamentary information is stored and shared. Using tools like Copilot to search emails, for example, can surface underlying problems in information management: shared inboxes, unclear permissions, documents edited by multiple users. This suggests information governance teams must be involved from the start, not brought in later.

Finally, questions about digital sovereignty and external partnerships (raised by Portugal and Bahrain) highlighted another dimension. The UK Parliament’s early decision to adopt cloud infrastructure and its reliance on external providers shape both what is possible and how risks are managed. Davies described this as a “mixed economy,” combining internal capacity with external expertise, but emphasized that this makes evaluation and training even more critical.

Taken together, the debate points to a shift that is already underway. The challenge is no longer identifying AI use cases — those are everywhere. The challenge is deciding how to organize around them: how to align pipelines, structure experimentation, manage information, and build capacity. Or, as Silke Albin put it, “it’s only when we shift from ‘AI use case introduction’ to ‘AI transformation’ that it becomes clear these changes must be reflected in processes, competencies, and management itself.”

Modern Parliament (“ModParl”) is a newsletter from POPVOX Foundation that provides insights into the evolution of legislative institutions worldwide. Learn more and subscribe at modparl.substack.com.