Keeping Pace

Keeping Pace is POPVOX Foundation’s monthly newsletter helping the Congressional workforce learn and adopt modern technology so every staffer is empowered to innovate. Each issue delivers practical, nonpartisan AI and technology education with no vendor bias, no policy asks, and no partisan agenda. Learn more and subscribe here.

Practical, nonpartisan AI and technology education for Congressional Members and staff

This is the first issue of Keeping Pace, formerly Future-Proofing Congress. POPVOX Foundation’s mission is to help Congress close the gap between how fast technology is moving and how well institutions understand it. We call this the Pacing Problem. Keeping Pace will hit inboxes monthly, giving congressional and legislative staff practical, nonpartisan AI and technology education: no vendor bias, no asks, no politics.

Newsletter length: This is an 8-minute read.

LETTER FROM THE EDITOR

In the ten days between April 7 and April 17, Anthropic announced three distinct products: Claude Mythos Preview, Claude Opus 4.7, and Claude Design. The next week, OpenAI released GPT 5.5 and Images 2.0. And on April 24, China’s DeepSeek released its V4 model, which it claims “closes the gap” with US frontier models. It was a whirlwind stretch for AI, and an increasingly normal one: the pace is not slowing down.

AI is not one tool. It is a toolbox: a growing, evolving suite of technologies that serve different purposes and can assist in different ways. New capabilities are being added faster than most people can track, and that pace is being felt in every industry and workplace, including Congress. Understanding these tools is not a responsibility limited to staff with technology portfolios. Every Member and staffer who engages with these tools helps Congress keep up.

That is what this newsletter is for. Each month, we cover what is cutting edge in the AI world, explain how that translates into the Congressional setting, and give you tips for building your AI skills and comfort level. No policy positions, no vendor pitches, no asks. Just context to help you stay in the conversation.

This month, we’re looking at:

What AI agents actually are, and why your office will be affected even if you never use one

Why Claude Mythos and Project Glasswing should be on your radar

Two prompting tips and a tool to counter deepfake impersonators

Some additional reports, AI tools, and items that caught our attention

As a former Congressional staffer who has spent the last three years learning AI tools and bringing those lessons back to the Hill, I am looking forward to continuing that work together.

Aubrey Wilson

Managing Director

POPVOX Foundation

TECH WATCH

AI agents are here

An AI agent is software that can be assigned a task or entire workflow and execute it autonomously, without a human in the loop at every step. By contrast, AI chatbots (ChatGPT, Claude, Gemini) require ongoing human engagement. Agents are a different category of AI tool entirely, and increasingly common.

Agents generally fit into two buckets at this time. The first are embedded agents, the kind already in your tools: Microsoft Copilot in Word or Outlook, for example. These help with one task at a time, require you at the keyboard for each step, and generally stay inside the application you opened. The second are autonomous agents, which can be assigned an entire workflow and execute it without you present: monitor a docket and alert on new filings, manage an inbox and send responses, research a topic across dozens of sources (without prompting) and produce a memo.

No parliamentary institution (including the House and Senate) has formally approved agents for internal use. But agents are already part of your environment. Lobbyists, advocacy organizations, and other outside actors are using agentic tools to streamline their engagement with Congress. Constituent outreach, policy monitoring, and legislative tracking are increasingly being performed by agents on behalf of users. Knowing what agents can do may help you and your office assess incoming mail, perform informed oversight of future federal AI programs, and explore internal use cases.

POPVOX Foundation’s plain-language explainer covers what AI agents are, context on the technology’s evolution, and more.

NOTEWORTHY NEWS

Mythos, Project Glasswing, and Congress (?)

On April 7, Anthropic announced Claude Mythos Preview. It is a frontier AI model designed for general use that also surpassed all prior models in its ability to identify and exploit vulnerabilities in IT infrastructure. When Anthropic used it to audit widely used software, Mythos found thousands of high-severity vulnerabilities across all major operating systems and web browsers.

Because of that power, Anthropic is not releasing Mythos publicly (at this time). Instead, it announced Project Glasswing: an invitation-only initiative bringing together more than forty organizations that build or maintain critical IT infrastructure (including Amazon Web Services, Apple, Google, Microsoft, and JPMorganChase) to scan and secure essential systems ahead of a broader proliferation of AI-enabled security threats.

Congress’s IT environment is essential infrastructure and one of the most targeted cybersecurity environments in the world. To our knowledge, Congress is not part of Project Glasswing, but the Executive branch is.

Pentagon employees built 100,000 AI agents in five weeks

Defense Department personnel have used a version of Google Gemini’s Agent Designer on the department’s GenAI.mil platform to create more than 103,000 semi-autonomous AI agents since late March. The tool requires no coding knowledge; personnel describe what they want in plain language and the system builds it. The agents are authorized for unclassified use and handle tasks like drafting after-action reports, analyzing imagery, reviewing official strategy documents, and processing financial data.

SKILLS HUB

A low-tech way to protect your office from voice deepfakes

AI voice cloning has made it easier to impersonate Members, chiefs of staff, and senior staff by phone. A staffer could receive an urgent call that sounds exactly like someone they trust, with no easy way to verify it in the moment. Members of both the House and Senate have already experienced this type of impersonation.

The National Cybersecurity Alliance recommends a simple countermeasure: establish a pre-agreed code word or phrase known only to your trusted inner circle. Use it to verify identity any time something feels off: an unusual financial request, an urgent ask to share sensitive information, anything that does not quite add up. If a call feels suspicious, hang up and dial back using a number stored in your contacts, even if the caller urges you to stay on the line.

Two prompting habits worth building now

The best way to get sharper on AI is to use the tools available to you. Before you start, make sure you know your institution’s official guidance. Check the HouseNet AI page, the Senate Sergeant at Arms portal, or POPVOX Foundation’s tracker. Then confirm your office’s internal policy with your chief of staff (or take the lead in helping create one).

Once you know which tools are approved for use, choose one and experiment with these tips:

1. Give the LLM context about who you are.

The tool does not know where you work, who you represent, or why you are asking. Providing that context, even briefly, may produce meaningfully better answers. Try this comparison: open your preferred LLM and run these two prompts in separate chats.

Prompt A: What does the acronym AGI stand for and what should I know about it?

Prompt B: I’m a congressional staffer working for a Member of Congress representing a district in Iowa. In a recent stakeholder meeting with a healthcare company, the term AGI came up. What does it stand for and what should I know about it as a healthcare LA?

The difference in the responses will be noticeable.

2. For complex prompts, repeat the key instruction.

When you are asking an LLM to do something multi-step or nuanced, research suggests that repeating or reinforcing your core instruction can improve performance. A simple way to try this: once you have written a complicated prompt, copy and paste it a second time in the same message before you submit. The idea is that the repetition signals to the model that this instruction matters and is worth attending to carefully.
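For staff who like to script their prompts, the two habits above can be sketched in a few lines of Python. This is only an illustration, not an official tool: the function name build_prompt and its parameters are hypothetical, and the code just assembles text. It does not call any AI service, so you would still paste the result into whichever tool your office has approved.

```python
def build_prompt(question: str, context: str = "", repeat: bool = False) -> str:
    """Assemble a prompt from optional context plus a question.

    build_prompt is a hypothetical helper for illustration only.
    If repeat is True, the full prompt appears twice in one message.
    """
    # Tip 1: put who you are and why you are asking ahead of the question.
    parts = [context.strip(), question.strip()]
    prompt = " ".join(part for part in parts if part)

    # Tip 2: for complex prompts, include the whole prompt a second time.
    if repeat:
        prompt = prompt + "\n\n" + prompt
    return prompt


if __name__ == "__main__":
    example = build_prompt(
        question="What does AGI stand for and what should I know about it "
                 "as a healthcare LA?",
        context="I'm a congressional staffer working for a Member of Congress "
                "representing a district in Iowa.",
        repeat=True,
    )
    print(example)
```

Running the script prints the context-rich prompt twice, separated by a blank line, ready to copy into a single chat message.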

For more tips see this easy prompting guide and other resources customized for congressional use cases at popvox.org/ai.

AI USE INSPIRATION

Departure Dialogues interactive reports

The Departure Dialogues Project is a working proof of concept for how Congressional committees could conduct large-scale stakeholder research at low cost. In early 2025, thousands of career civil servants left the federal government, taking years of implementation knowledge with them. POPVOX Foundation and four partner organizations built a structured pathway to capture that knowledge and collected dozens of submissions. An AI-assisted analysis tool called Talk to the City turned those responses into three interactive reports covering federal modernization, health and environment agencies, and international programs. Each report lets you move between clusters of ideas, read the quotes behind each theme, and trace patterns across agencies and tenures.

Communications Director vibe codes AI floor tracker website

Zack Florman, communications director for Rep. Laura Friedman (D-CA), wanted an easier way to stay informed about floor schedule updates. With no coding background, on his own time, and using a personal Gemini subscription, he built the tool he had been looking for. The Capitol Wire is an AI bot (transformed into a website) that monitors the House Clerk’s and Majority Leader’s websites in real time, alerts staff the moment an update posts, and generates bill summaries. AI has lowered the barrier to building bespoke tools, and The Capitol Wire is an example of how AI can empower staff to solve everyday workflow issues in new, impactful ways. Read the full Roll Call piece about Zack and The Capitol Wire here.

CAUGHT OUR EYE

Stanford HAI’s 2026 AI Index

The Stanford Human-Centered AI Institute released its annual data-driven snapshot of AI adoption, investment, and challenges globally. A useful reference for staff fielding constituent questions or preparing for hearings.

Lessons from the 152nd IPU Assembly

In Turkey this month, secretaries general from the German Bundestag and UK House of Commons compared notes on institutional AI adoption. POPVOX Foundation Fellow Beatriz Rey’s full recap is in the ModParl newsletter.

AI moves into design: Claude Design and ChatGPT Images 2.0

On April 17, Anthropic released Claude Design, a tool for creating prototypes, slides, and visual assets through conversation, powered by Claude Opus 4.7. Five days later, OpenAI released ChatGPT Images 2.0. Both products signal a significant expansion beyond language models into the user experience and visual design space, putting AI companies in direct competition with Adobe, Figma, and Canva. Worth watching for offices that produce public-facing communications.

OpenAI on AI governance

OpenAI published a policy report, Industrial Policy for the Intelligence Age, framing the pacing problem: institutions are falling behind in governing rapidly evolving AI technology. Worth a read for anyone tracking how major AI developers are engaging with policymakers.

C-TECH on AEI’s Understanding Congress

I joined AEI Senior Fellow Dr. Kevin Kosar to discuss the case for a Congressional Capacity and Technology Office. The conversation covers what C-TECH would do, how it differs from existing congressional support agencies, and why current AI training gaps are a structural problem rather than an individual one.

80,000+ real AI conversations

In December 2025, nearly 81,000 people across 159 countries and 70 languages had a structured conversation with an AI interviewer about their hopes, fears, and daily use of these tools. Interesting on its own; also a concrete example of how AI-enabled constituent engagement could work at scale.

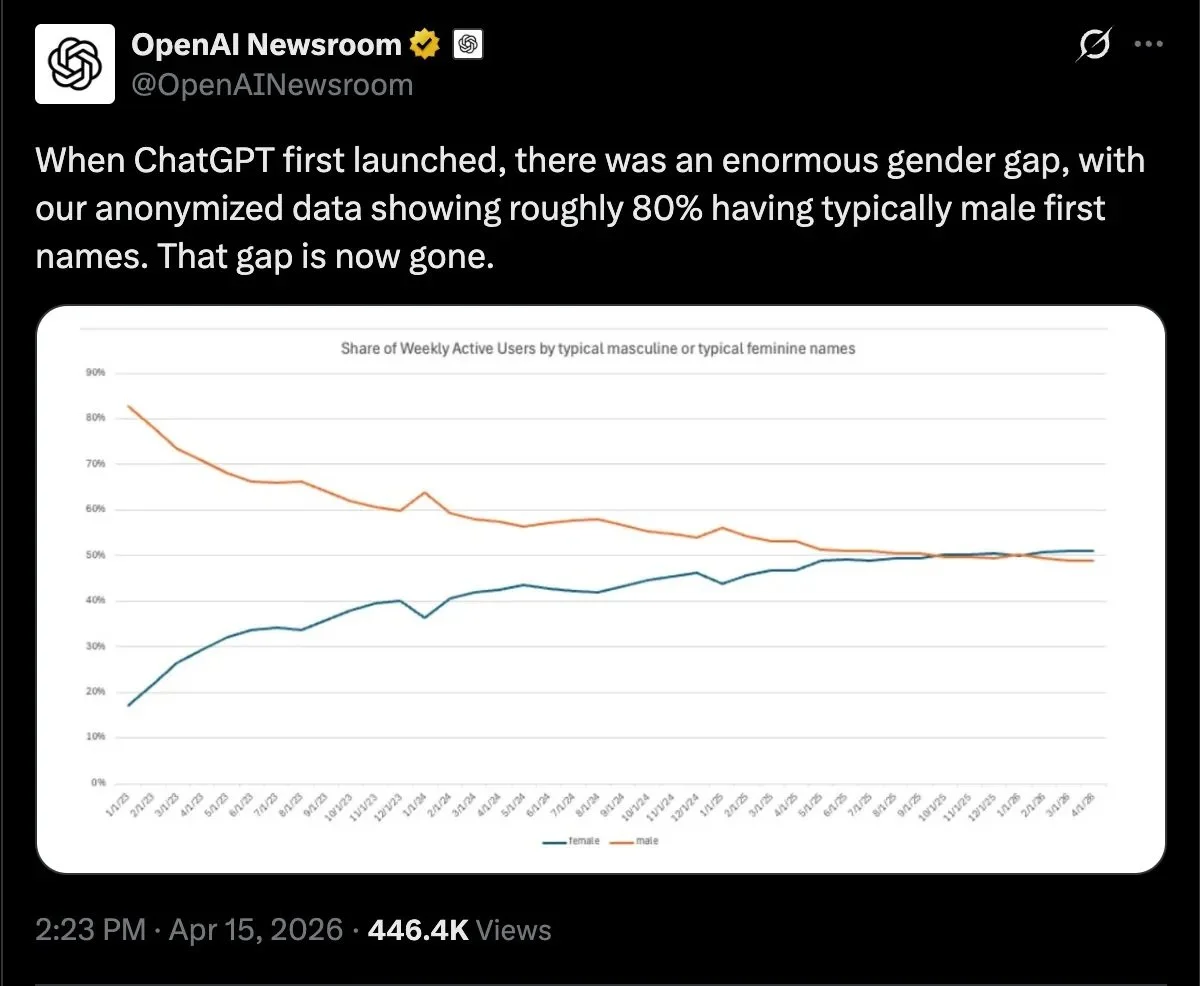

The gender gap in AI use is closing

OpenAI’s anonymized usage data shows that the large gender gap present after ChatGPT’s launch in late 2022 has now closed.

About POPVOX Foundation

POPVOX Foundation is a nonpartisan nonprofit that helps democratic institutions keep pace with a rapidly changing world. Through publications, events, prototypes, and technical assistance, the organization helps public servants and elected officials better serve their constituents and make better policy.